The Generative Frontier: Setting the Next Enterprise Intelligence Paradigm

VeloTechna Editorial

Observed on Jan 24, 2026

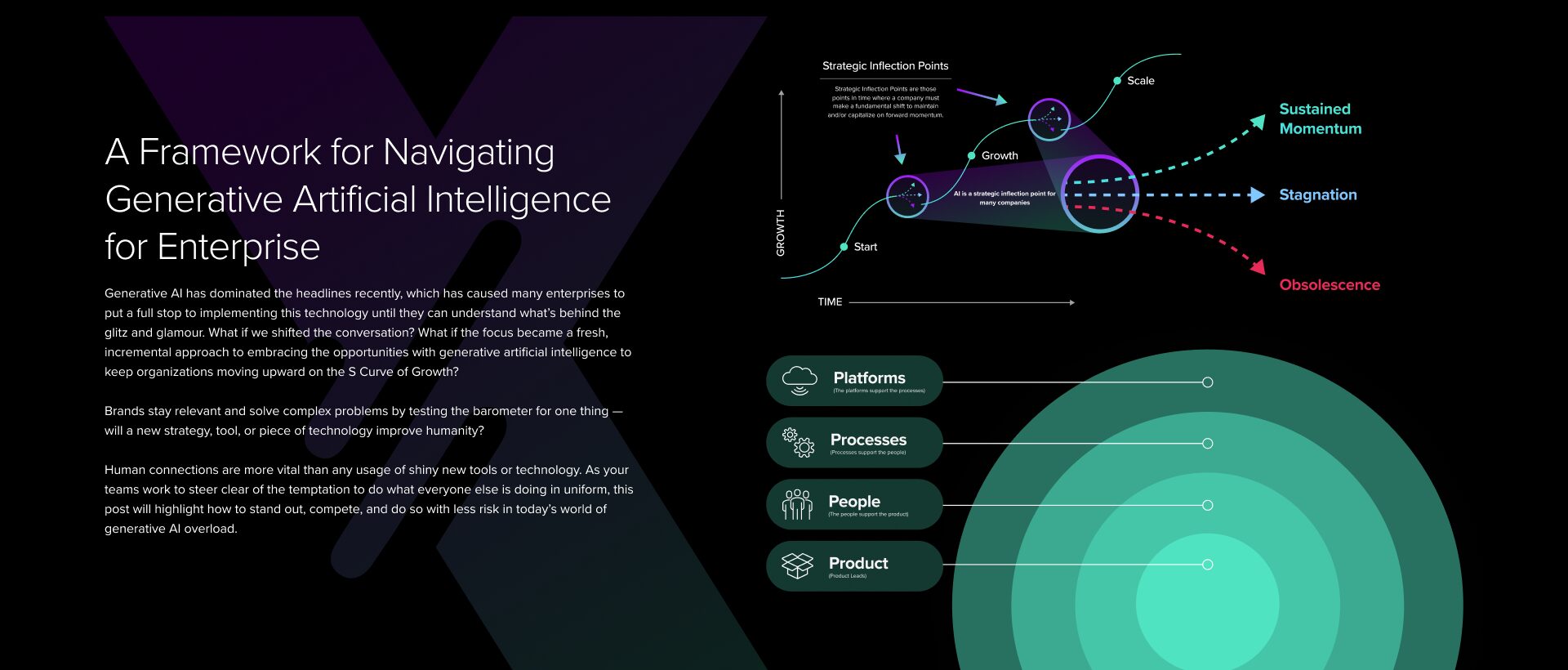

Technical Analysis Visualization

VELOTECHNA, Silicon Valley - The global technology ecosystem is undergoing a tectonic shift, moving from the initial euphoria around generative artificial intelligence (GenAI) to a phase of tighter structural integration. As companies move beyond the 'proof of concept' stage, the focus shifts to the industrialization of intelligence. This evolution is not just about more sophisticated models, but about the data orchestration, computing infrastructure, and agentic workflows that are redefining the nature of the digital workforce. Recent industry developments, as highlighted in Source, mark the critical point where infrastructure readiness meets strategic implementation.

Agentic System Mechanism

At the heart of this transition is the move from open-ended, probabilistic chat interfaces to more structured, goal-directed agent systems. While the first wave of GenAI focused on text and image generation, today's technical frontier is the 'Agentic Workflow': a system capable of iterative reasoning, tool use, and multi-step planning. By leveraging Retrieval-Augmented Generation (RAG), organizations ground Large Language Models (LLMs) in proprietary data, substantially reducing the risk of hallucinations that previously plagued enterprise adoption. We are also seeing a move towards 'Small Language Models' (SLMs) that are tailored for specific vertical tasks, offering higher efficiency and lower latency than general-purpose large models. These mechanisms represent the 'engine room' of productivity improvements in the next decade, where AI no longer just suggests content but executes complex business processes autonomously.
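The retrieval step behind RAG can be illustrated with a minimal sketch. It is not a production design: the corpus, the function names (`tokenize`, `cosine`, `retrieve`, `build_prompt`), and the bag-of-words similarity are all assumptions chosen for illustration, and a real deployment would more likely use learned embeddings and a vector store. The sketch only shows the core idea, which is to select the most relevant proprietary passage and place it in the prompt before the model is called.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory "proprietary" corpus (illustrative data only).
DOCUMENTS = [
    "Refund requests are approved within 14 days of purchase.",
    "Enterprise support tickets are answered within 4 business hours.",
    "Employee laptops are refreshed every 36 months.",
]


def tokenize(text: str) -> Counter:
    """Lowercase the text and count alphanumeric word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: list[str], k: int = 1) -> str:
    """Assemble a grounded prompt; the model call itself is out of scope here."""
    context = "\n".join(f"- {passage}" for passage in retrieve(query, docs, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How quickly are support tickets answered?", DOCUMENTS))
```

An agentic workflow would typically wrap a step like `build_prompt` in a loop that also decides which tool to call next. The sketch stops at retrieval because that is the part that ties the model's answer to company data.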

Dominant Players and the Computational Arms Race

The competitive landscape has divided into Hyperscalers and Specialized Disruptors. Microsoft, through its partnership with OpenAI, continues to lead the way in software layer integration, while Google and Meta leverage their vast data moats to enhance both open-source and proprietary models. However, the real king is still the hardware provider. NVIDIA's dominance in the GPU market has created a bottleneck that forces players like Amazon and Apple to develop their own silicon. This vertical integration is a defensive maneuver to secure the supply chain and optimize cost per inference. Meanwhile, the 'Open Source' movement, led by Meta's Llama series and Mistral, is democratizing access to high-level intelligence, challenging the hegemony of closed models, and forcing the rapid commoditization of raw intelligence tokens.

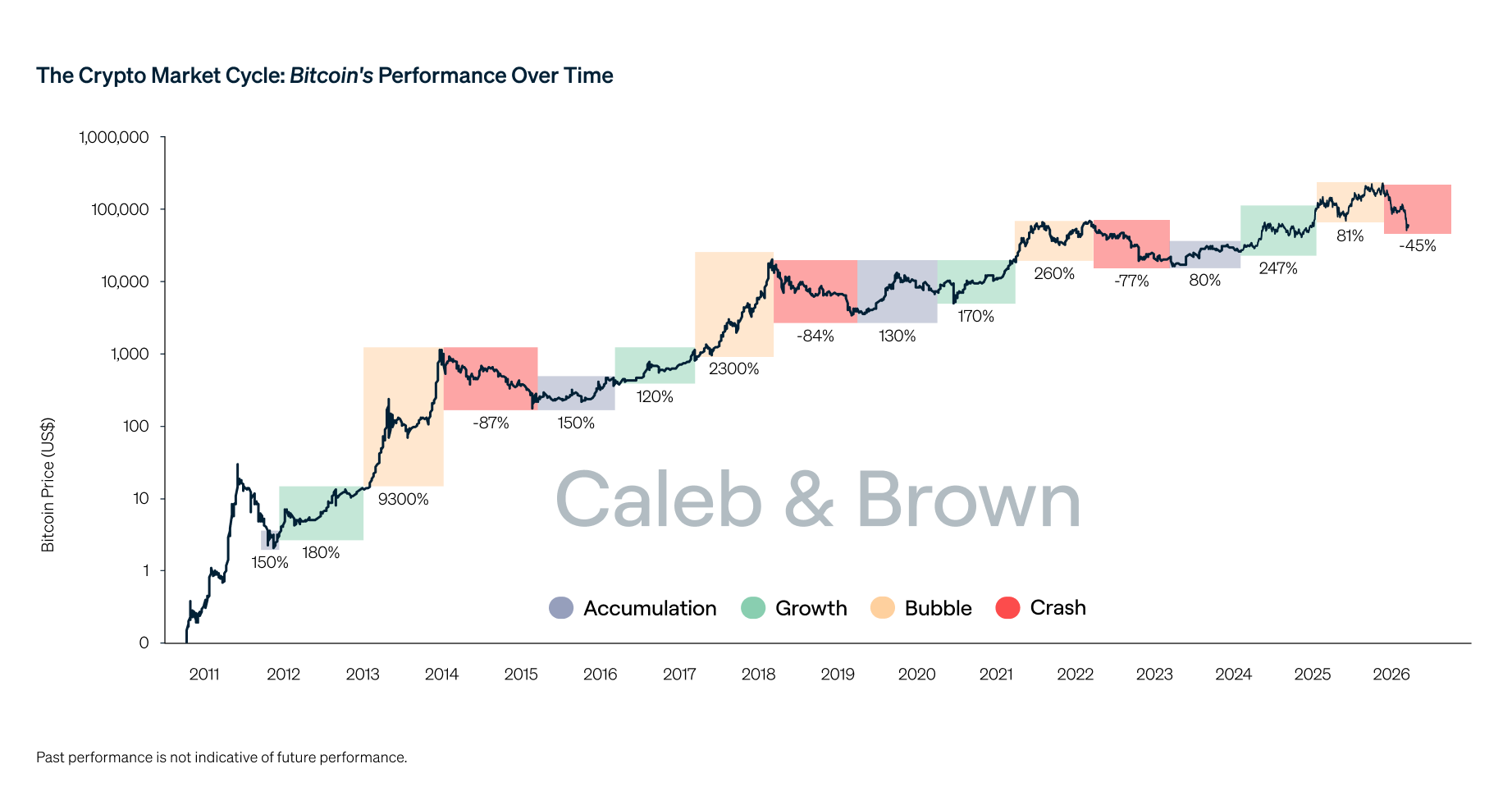

Market Reaction and Economic Volatility

The market reaction to this rapid evolution has been a mix of exuberant investment and prudent skepticism. Although capital expenditure (CapEx) on data centers has reached historic highs, investors are starting to demand proof of 'Return on AI' (ROAI). We have observed a 'valuation correction' in companies that only provide AI wrappers, while infrastructure companies and cybersecurity companies that specialize in AI security have experienced unprecedented growth. The market is increasingly rewarding companies that demonstrate operational leverage (the ability to increase revenue without a commensurate increase in headcount) through AI-based automation. This fiscal scrutiny is healthy; it filters out the speculative noise and highlights companies that are fundamentally re-engineering their cost structures through machine intelligence.

Impact & Forecast: 24-Month Horizon

Over the next two years, we expect a transition from human-assisted AI to human-supervised AI networks. By 2026, we predict the emergence of 'Personal AI Operating Systems' that manage individuals' digital lives across devices. In the enterprise sector, the 'Siloed AI' model will be replaced by 'Interoperable Intelligence', in which different models from different vendors communicate through standard protocols to solve problems across departments. We also expect a big surge in Edge AI, where processing is done locally on the device rather than in the cloud, driven by privacy concerns and the need for near-zero-latency response times in sectors such as autonomous logistics and robotic manufacturing. The regulatory environment will also mature, with the EU AI Act setting global benchmarks for ethical implementation, potentially slowing down some high-risk applications while providing a stable framework for long-term investment.

Conclusion: The era of AI experimentation is over; the era of AI execution has begun. For modern enterprises, the challenge is no longer about choosing the 'best' model, but about building the most robust and scalable AI architecture. Those who successfully integrate this technology into their core DNA will achieve previously unimaginable levels of efficiency. At VELOTECHNA, we remain convinced that the strategic deployment of intelligence is the greatest competitive advantage of the 21st century. The window for basic adoption has closed; now is the time to take decisive action.
