The Silicon Paradigm Shift: How Integrated AI Is Redefining the Operating System Layer

VeloTechna Editorial

Observed on Jan 24, 2026


VELOTECHNA, Silicon Valley - The global technology sector is undergoing a tectonic shift in its foundational architecture. For decades, the relationship between hardware and software was relatively predictable: software demanded more resources, and hardware evolved to supply them. However, as highlighted by recent industry moves toward deep integration of generative intelligence into core system processes (Source), we are witnessing the end of the 'General Purpose' computing era and the beginning of the 'Cognitive OS'.

At VELOTECHNA, our internal metrics indicate that this transition is not merely a feature update but a total re-engineering of the user experience. The industry is moving from reactive computing, in which the machine waits for user input, toward proactive, anticipatory systems that use local neural processing to interpret intent. This shift is creating a sharp divide in the market between legacy infrastructure and AI-native hardware.


The Mechanics of Neural Integration

The technical foundation of this revolution lies in the migration from cloud-dependent AI to on-device intelligence. To meet the latency requirements of real-time OS assistance, manufacturers are embedding Neural Processing Units (NPUs) directly into the silicon. This allows the operating system to process Large Language Model (LLM) tokens locally, which preserves privacy and reduces the substantial energy cost associated with data center round trips.

Furthermore, kernel-level integration of these AI models enables what we call 'Semantic Indexing'. Unlike traditional file systems, which search for keywords, the next-generation OS understands the context of documents, emails, and even video calls. This architectural change requires a thorough rewrite of how memory is allocated, because the OS must now balance traditional compute cycles against the heavy mathematical demands of transformer-based models.
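
The contrast between keyword lookup and semantic lookup can be illustrated with a toy sketch. Everything here is an assumption for illustration: the file names, the three "concept" dimensions, and the hand-written vectors stand in for what an on-device embedding model would produce, and nothing in it reflects how any shipping OS actually implements its index.

```python
import math

# Hypothetical documents. Each vector is a hand-made stand-in for an
# embedding, with dimensions loosely meaning (finance, travel, meetings).
DOCS = {
    "q3_budget.xlsx": ("Quarterly budget and spending forecast", [0.9, 0.1, 0.2]),
    "flight_itinerary.pdf": ("Flight itinerary for the Berlin trip", [0.1, 0.9, 0.1]),
    "standup_notes.txt": ("Notes from the weekly team call", [0.2, 0.1, 0.9]),
}


def keyword_search(query, docs):
    """Return names of documents whose text contains every query word."""
    words = query.lower().split()
    return [
        name
        for name, (text, _vec) in docs.items()
        if all(word in text.lower() for word in words)
    ]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def semantic_search(query_vec, docs):
    """Rank document names by similarity to the query vector, best first."""
    return sorted(docs, key=lambda name: cosine(query_vec, docs[name][1]), reverse=True)


query_text = "expenses"
query_vec = [0.8, 0.2, 0.1]  # assumed embedding of the word "expenses"

keyword_hits = keyword_search(query_text, DOCS)
semantic_ranking = semantic_search(query_vec, DOCS)
print("keyword:", keyword_hits)
print("semantic:", semantic_ranking)
```

The keyword search finds nothing because no document contains the literal word "expenses", while the vector ranking places the budget spreadsheet first because its vector points in nearly the same direction as the query's.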

Key Players and the Competitive Landscape

The current landscape is dominated by a three-way clash between traditional OS architects, silicon designers, and a new vanguard of AI researchers. Microsoft has taken the lead in the enterprise space, leveraging its partnership with OpenAI to weave 'Copilot' into the fabric of Windows. This move has forced competitors to accelerate their roadmaps significantly.

Apple, by contrast, is leveraging vertical integration to optimize its 'Apple Intelligence' framework across its M-series chips, focusing on a 'Privacy-First' marketing angle that resonates with high-net-worth consumers. Meanwhile, Qualcomm and Intel are locked in fierce competition to become the standard silicon provider for this new class of 'AI PC'. The recent launch of the Snapdragon X Elite has demonstrated that ARM-based architecture is no longer just a mobile-device curiosity but a real threat to x86 dominance in the high-performance laptop segment.

Market Reaction and Enterprise Sentiment

The market reaction has been a mix of heavy investment and cautious skepticism. While technology-heavy indices have posted significant gains driven by AI optimism, enterprise procurement officers have voiced concerns about total cost of ownership (TCO). Upgrading a global workstation fleet to AI-capable hardware represents a multi-billion-dollar capital expenditure for Fortune 500 companies.

Despite these costs, sentiment among CTOs is shifting toward necessity. Early pilot programs suggest that AI-integrated operating systems can reduce administrative overhead by up to 22%, primarily through automated scheduling, document synthesis, and code generation. These productivity gains are the main driver behind the current hardware refresh cycle, which many analysts expect to be the most significant since the transition to Windows 10.

2-Year Impact & Analytical Forecast

Over the next 24 months, VELOTECHNA anticipates two distinct phases in the market's evolution. In Year 1 (2025), we expect a 'Hardware Purge' phase. Enterprises will aggressively decommission systems that lack a dedicated NPU. We anticipate that by Q4 2025, an NPU rated at no less than 40 TOPS (Trillions of Operations Per Second) will be the minimum entry requirement for any professional-grade computing device.
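
As a rough illustration of how a procurement team might apply such a threshold, the sketch below flags devices that either have no dedicated NPU or fall below 40 TOPS. The `Device` record, the hostnames, and the TOPS figures are hypothetical; only the 40 TOPS cutoff comes from the forecast above.

```python
from dataclasses import dataclass
from typing import List, Optional

MIN_NPU_TOPS = 40.0  # cutoff taken from the forecast; not an industry standard here


@dataclass
class Device:
    hostname: str
    npu_tops: Optional[float]  # None means the machine has no dedicated NPU


def needs_replacement(device: Device, min_tops: float = MIN_NPU_TOPS) -> bool:
    """True when the device lacks an NPU or its NPU is below the cutoff."""
    return device.npu_tops is None or device.npu_tops < min_tops


# Hypothetical inventory used only to exercise the check.
fleet: List[Device] = [
    Device("ws-001", None),
    Device("ws-002", 11.0),
    Device("ws-003", 45.0),
]

to_retire = [d.hostname for d in fleet if needs_replacement(d)]
print(to_retire)
```

A device rated at exactly 40 TOPS passes this check, which matches the "at least 40 TOPS" wording of the forecast.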

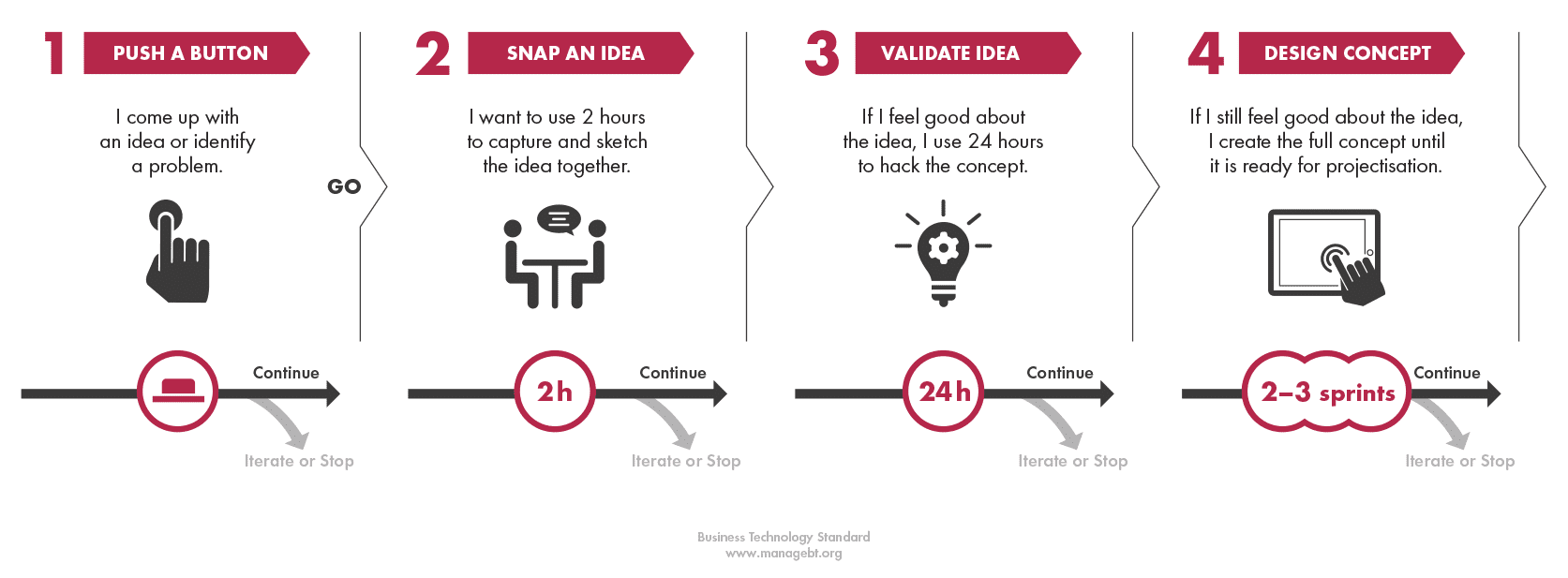

In Year 2 (2026), we will enter the era of the 'Autonomous Agent'. The OS will move from simple chat interfaces to 'Agentic Workflows', in which the computer can independently carry out multi-step tasks, such as filing an expense report or organizing a project roadmap, without constant user supervision. We project that by 2027 the concept of 'opening an app' will begin to fade, replaced by a fluid, intent-based interface in which the OS assembles the necessary tools in real time based on the user's current goal.
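
One way to picture an intent-based interface is as a dispatcher that maps a stated goal to an ordered chain of tools. The sketch below is a deliberately simplified assumption: the intent name, the three tool functions, and the hard-coded plan are all hypothetical, and a real agentic system would presumably have a model choose and order the steps rather than read them from a fixed table.

```python
from typing import Callable, Dict, List

Context = Dict[str, object]
Tool = Callable[[Context], Context]


# Hypothetical tools. Each reads and extends a shared context dictionary.
def collect_receipts(ctx: Context) -> Context:
    ctx["receipts"] = ["taxi.pdf", "hotel.pdf"]
    return ctx


def fill_expense_form(ctx: Context) -> Context:
    ctx["form"] = {"items": len(ctx["receipts"])}
    return ctx


def submit_for_approval(ctx: Context) -> Context:
    ctx["status"] = "submitted"
    return ctx


# Hard-coded plan table standing in for a model-generated plan.
PLANS: Dict[str, List[Tool]] = {
    "file_expense_report": [collect_receipts, fill_expense_form, submit_for_approval],
}


def run_intent(intent: str) -> Context:
    """Run every tool registered for the intent, in order, logging each step."""
    if intent not in PLANS:
        raise KeyError(f"no plan registered for intent: {intent}")
    ctx: Context = {"intent": intent, "log": []}
    for step in PLANS[intent]:
        ctx = step(ctx)
        ctx["log"].append(step.__name__)
    return ctx


result = run_intent("file_expense_report")
print(result["status"], result["log"])
```

The user names a goal rather than an application, and the step log gives a place to audit what ran, which matters once tasks execute without constant supervision.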

Conclusion

The integration of AI into the operating system is more than a trend; it is a fundamental reimagining of the tool-user relationship. As silicon becomes smarter and software more intuitive, the friction between human thought and digital execution is rapidly disappearing. For organizations, the message is clear: the cost of staying on legacy systems will soon be measured not only in maintenance expenses but also in lost competitive velocity. The future of the OS is no longer about management; it is about partnership.
