The Ghost in the Machine: Unraveling the Philosophical Labyrinth of Rational AI

VeloTechna Editorial

Observed on Feb 02, 2026

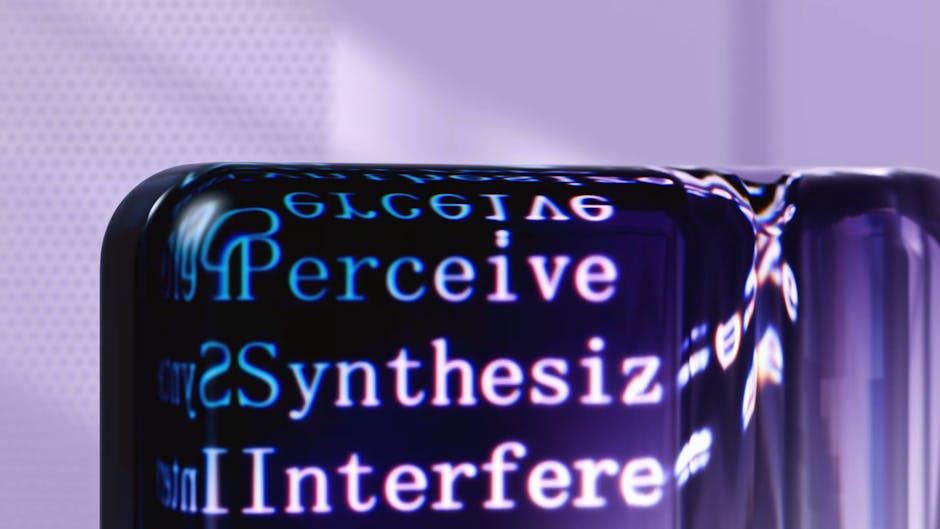

Technical Analysis Visualization

DATELINE: VELOTECHNA, Silicon Valley - As the global race for Artificial General Intelligence (AGI) intensifies, a fundamental question remains unanswered: can a machine really think, or does it merely simulate thought through its architecture? According to a report from MIT News, the scientific community is grappling with a deep philosophical puzzle about the nature of rationality in artificial systems. The investigation goes beyond standard metrics such as processing power and dataset size and examines the epistemological foundations of how machines 'know' what they claim to know.

Core Conflict: Logic vs. Statistics

According to the MIT News report, the current generation of Large Language Models (LLMs) operates on a probabilistic prediction paradigm, not formal logic. While these systems can compose poetry or debug code with extraordinary efficiency, they lack a fundamental understanding of the world, a capacity that philosophers discuss under the heading of 'intentionality'. Technical analysis provided by MIT researchers shows that while AI can follow syntactic rules, it does not necessarily understand the semantics or 'truth value' of its output.

The distinction at issue is between rationality of action and rationality of belief. A system may be rational in pursuing a mathematical goal (minimizing its loss function) yet remain irrational if its internal model of reality is disconnected from empirical truth. This 'black box' problem is not just a technical obstacle; it is a philosophical crisis. As MIT experts point out, without a formal framework for machine rationality we are essentially building increasingly sophisticated tools that have no logical rudder.
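The gap between minimizing a loss and holding an accurate model of the world can be illustrated with a toy sketch. Everything here is an assumption made for illustration and does not come from the MIT report: the "world" is the function y = x², the learner is a straight-line fit, and the names `fit_line`, `mse`, and `world` are hypothetical.

```python
# Toy illustration: a low training loss does not imply an accurate world model.
# Assumption: the "world" is y = x**2, and the learner only sees x in [0, 1].

def fit_line(xs, ys):
    """Closed-form least-squares fit of y = a*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

def mse(a, b, xs, ys):
    """Mean squared error of the line a*x + b on the given points."""
    return sum((a * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def world(x):
    """The true (hypothetical) relationship the learner never sees in full."""
    return x ** 2

# Training data covers only a narrow slice of the world.
train_x = [i / 10 for i in range(11)]
train_y = [world(x) for x in train_x]
a, b = fit_line(train_x, train_y)

# "Action rationality": the objective is minimized well on what was seen.
train_loss = mse(a, b, train_x, train_y)

# "Belief rationality": the learned model is badly wrong outside that slice.
test_x = [5.0, 10.0]
test_y = [world(x) for x in test_x]
test_loss = mse(a, b, test_x, test_y)

print(f"train loss: {train_loss:.4f}, test loss: {test_loss:.1f}")
```

The fitted line is optimal with respect to its objective, yet its implied belief that the world is linear is false, which is the distinction the paragraph above draws.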

Technical Analysis: The Formal Logic Gap

In technical fields, the challenge is often framed as an 'alignment problem', but the MIT report elevates it to a question of fundamental rationality. Current AI architectures are largely connectionist, meaning they learn from patterns in data. Human rationality, by contrast, is often characterized by symbolic reasoning: the ability to apply discrete rules to new situations regardless of how often similar cases have been seen before.

According to the MIT News report, researchers are investigating whether a hybrid approach, often called neuro-symbolic AI, can bridge this gap. It combines the pattern-recognition capabilities of neural networks with the strict, rule-based logic of classical AI. Without this synthesis, AI will remain susceptible to 'hallucinations', which are not simply errors but symptoms of a system that lacks a coherent logical framework for checking its own statements against principles such as the law of non-contradiction.
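As a minimal sketch of what a symbolic check layered on top of a statistical generator might look like, the snippet below flags statements that violate the law of non-contradiction. It is an illustration only, not a method described in the report: it assumes some upstream step has already turned generated text into (proposition, truth value) pairs, and the function name `find_contradictions` and the sample propositions are hypothetical.

```python
# Hypothetical symbolic layer: claims are (proposition, truth_value) pairs.
# Extracting these pairs from generated text is assumed, not shown.

def find_contradictions(claims):
    """Return the propositions asserted as both true and false."""
    seen = {}             # proposition -> first truth value asserted
    contradictions = []   # propositions later asserted with the opposite value
    for proposition, value in claims:
        if proposition in seen and seen[proposition] != value:
            if proposition not in contradictions:
                contradictions.append(proposition)
        seen.setdefault(proposition, value)
    return contradictions

generated = [
    ("patient_has_fever", True),
    ("drug_x_is_safe", True),
    ("patient_has_fever", False),  # conflicts with the first claim
]

conflicts = find_contradictions(generated)
print(conflicts)  # → ['patient_has_fever']
```

A check like this says nothing about which of the conflicting claims is true; it only shows that the output as a whole cannot be, which is the kind of self-verification the hybrid approach aims to provide.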

Industrial Impact: Trust Deficit

The implications of this philosophical conundrum go far beyond the ivory tower and affect every sector that is currently integrating AI. In high-risk settings such as autonomous vehicle operation, medical diagnosis, and legal decision-making, the requirement for 'rational' decision-making is absolute. If a machine cannot provide a rational justification for its actions (a capability known as 'explainability'), the result is a liability gap that current regulatory frameworks cannot address.

Industry analysts looking at MIT's findings suggest that the 'trust deficit' in AI is directly linked to a lack of perceived rationality. According to the MIT News report, if we cannot define what 'rational' means for AI, we cannot safely delegate moral or life-critical decisions to these systems. This has had a chilling effect in some enterprise sectors, where the unpredictability of 'black box' reasoning is seen as outweighing the efficiency gains of further automation.

VELOTECHNA's Future Forecast

Looking ahead, VELOTECHNA analysts anticipate changes in the AI development roadmap. The industry will likely move away from the 'bigger is better' model training philosophy and towards a 'verifiable reasoning' architecture. According to a report from MIT News, the next frontier will not be determined by the quantity of parameters, but by the quality of the underlying cognitive architecture.

We predict that 'Epistemic AI' will emerge within the next five years: systems designed with built-in philosophical constraints that prioritize logical consistency over mere statistical probability. This will likely involve a return to formal verification methods, in which AI outputs are checked against a set of immutable logical axioms. We also expect a surge in demand for 'AI Ethicists' trained not only in coding but also in formal logic and epistemology, to ensure that future machines are not only fast but fundamentally 'reasonable'.
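To make the idea of checking outputs against fixed axioms concrete, here is a small sketch under stated assumptions. It uses brute-force enumeration of truth assignments, which is only practical for a handful of variables and is not what production verification tools do. The variables, axioms, and the function `consistent_with_axioms` are hypothetical and chosen purely for illustration.

```python
from itertools import product

def consistent_with_axioms(variables, axioms, claim):
    """True if some truth assignment satisfies every axiom and the claim."""
    for values in product([False, True], repeat=len(variables)):
        assignment = dict(zip(variables, values))
        if all(axiom(assignment) for axiom in axioms) and claim(assignment):
            return True
    return False

# Hypothetical driving scenario with two propositional variables.
variables = ["obstacle_ahead", "accelerate"]

axioms = [
    # Rule: if there is an obstacle ahead, do not accelerate.
    lambda w: (not w["obstacle_ahead"]) or (not w["accelerate"]),
    # Observed fact: there is an obstacle ahead.
    lambda w: w["obstacle_ahead"],
]

# Two candidate outputs from a (hypothetical) model.
proposes_accelerate = lambda w: w["accelerate"]
proposes_hold = lambda w: not w["accelerate"]

accelerate_ok = consistent_with_axioms(variables, axioms, proposes_accelerate)
hold_ok = consistent_with_axioms(variables, axioms, proposes_hold)
print(accelerate_ok, hold_ok)  # → False True
```

An output that no assignment can reconcile with the axioms is rejected regardless of how probable the model considered it, which is the sense in which logical consistency would take priority over statistical likelihood.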

Ultimately, the philosophical conundrum identified by MIT serves as a necessary reality check for the industry. As we reach for the AGI stars, we must ensure that our machines are based on the rational principles that have guided human progress for millennia. Without a foundation of logic, AI is just a sophisticated mirror that reflects our data without understanding our world.
