The Generative Frontier: Navigating the Next Paradigm of Enterprise Intelligence

VeloTechna Editorial

Observed on Jan 24, 2026

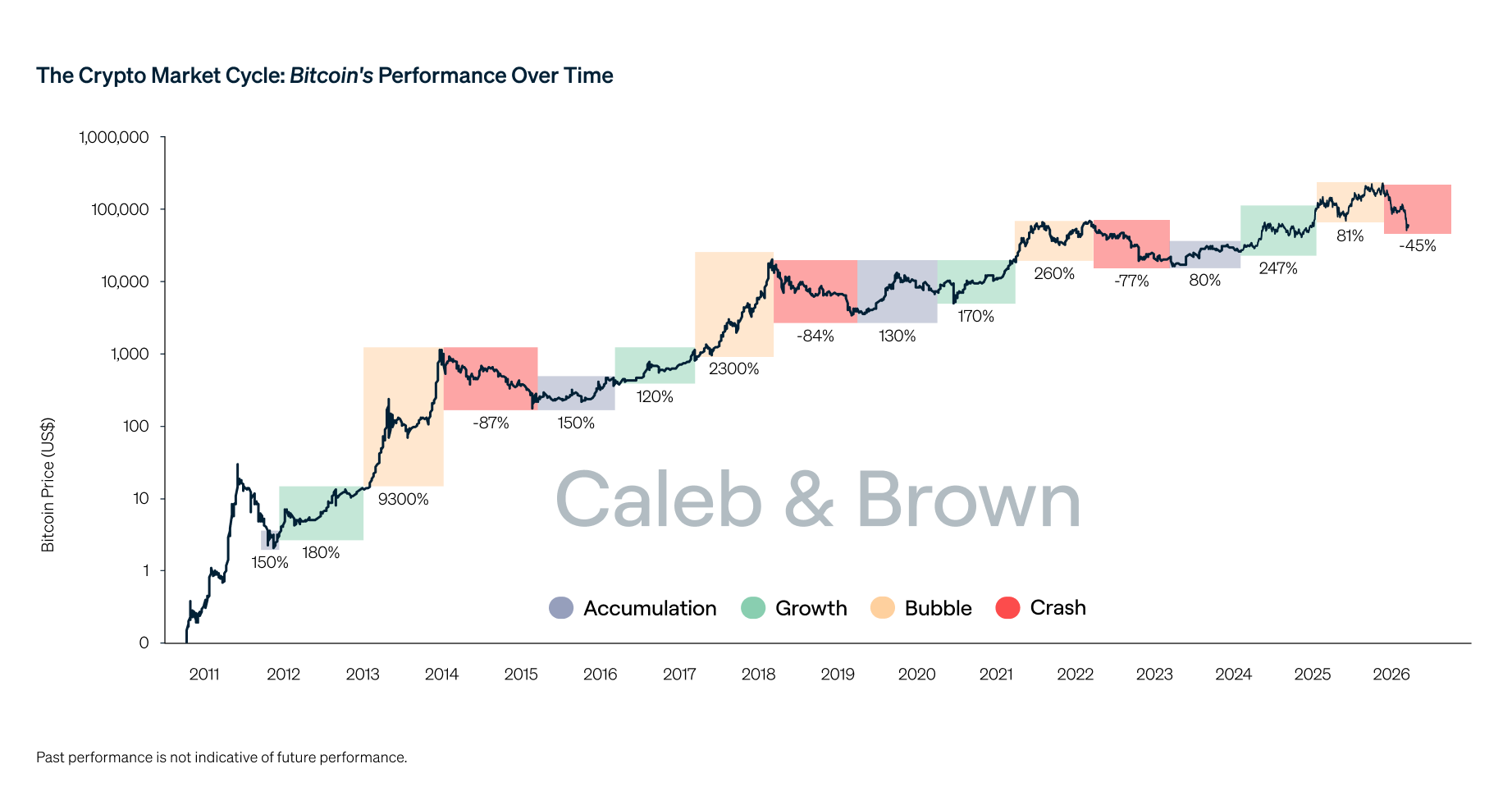

Technical Analysis Visualization

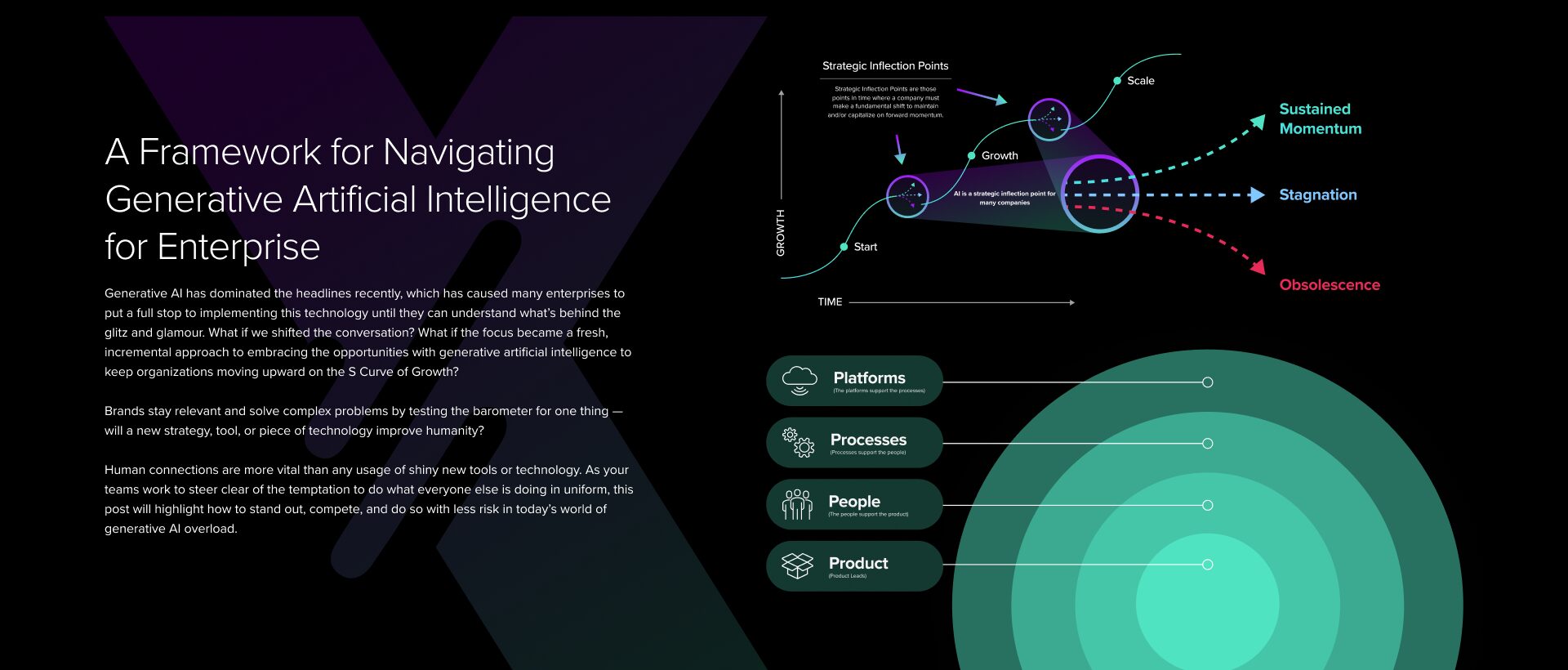

VELOTECHNA, Silicon Valley - The global technology ecosystem is undergoing a tectonic shift, moving from the initial euphoria around generative artificial intelligence (GenAI) to a more rigorous phase of structural integration. As enterprises move beyond the proof-of-concept stage, the focus is shifting to the industrialization of intelligence. This evolution is not only about more capable models; it is about the orchestration of data, compute, and agentic workflows that is redefining the basic nature of the digital workforce. Recent industry developments, as highlighted in the source, underscore a critical point at which infrastructure readiness meets strategic implementation.

The Mechanics of Agentic Systems

At the core of this transition is the move from probabilistic chat interfaces to more deterministic agent systems. While the first wave of GenAI focused on generating text and images, the current frontier involves 'Agentic Workflows': systems capable of iterative reasoning, tool use, and multi-step planning. By using Retrieval-Augmented Generation (RAG), organizations ground Large Language Models (LLMs) in proprietary data, which reduces, though does not eliminate, the hallucination risk that previously hampered enterprise adoption. We are also seeing a move toward 'Small Language Models' (SLMs) tailored to specific vertical tasks, which offer higher efficiency and lower latency than general-purpose large models. These mechanisms are the 'engine room' of the next decade's productivity gains, in which AI no longer merely suggests content but executes complex business processes autonomously.
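To make the grounding step of RAG concrete, here is a minimal sketch in Python. It assumes a tiny in-memory corpus and a simple bag-of-words cosine similarity in place of a real embedding model and vector store. The document IDs, texts, and function names (`retrieve`, `build_prompt`) are hypothetical and chosen only for illustration, and the call to an actual LLM is deliberately left out.

```python
import math
import re
from collections import Counter

# Hypothetical proprietary documents (assumption: a real system would use a document store).
DOCS = {
    "refund-policy": "Refunds are issued within 14 days of a return request.",
    "shipping-policy": "Standard shipping takes 5 business days within the EU.",
    "support-hours": "Support is available on weekdays from 9:00 to 17:00 CET.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-frequency Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, docs, k=1):
    """Return the k (doc_id, text) pairs most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        docs.items(),
        key=lambda item: cosine(q, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, passages):
    """Assemble a prompt that restricts the model to the retrieved context."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


question = "How long do refunds take?"
passages = retrieve(question, DOCS)
prompt = build_prompt(question, passages)
print(prompt)  # this string would be sent to whichever LLM the organization uses
```

The design point is that the model is asked to answer from retrieved passages rather than from its parametric memory alone, which is why grounding lowers hallucination risk without removing it: a retrieval miss or an ambiguous passage can still lead to a wrong answer.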

Dominant Players and the Compute Arms Race

The competitive landscape has split into Hyperscalers and Specialized Disruptors. Microsoft, through its partnership with OpenAI, continues to lead in integration at the software layer, while Google and Meta leverage their vast data moats to refine both open-source and proprietary models. The real kingmakers, however, remain the hardware providers. NVIDIA's dominance of the GPU market has created a bottleneck that is forcing players such as Amazon and Apple to develop their own silicon. This vertical integration is a defensive maneuver to secure supply chains and optimize cost per inference. Meanwhile, the 'Open Source' movement, led by Meta's Llama series and Mistral, is democratizing access to high-end intelligence, challenging the hegemony of closed models, and forcing the rapid commoditization of raw intelligence tokens.

Market Reaction and Economic Volatility

The market's reaction to this rapid evolution is a mix of exuberant investment and prudent skepticism. Although capital expenditure (CapEx) on data centers has reached record highs, investors are beginning to demand proof of 'Return on AI' (ROAI). We are observing a 'valuation correction' among companies that offer only AI wrappers, while infrastructure companies and cybersecurity firms specializing in AI protection are seeing unprecedented growth. The market increasingly rewards companies that demonstrate operating leverage through AI-driven automation, meaning the ability to grow revenue without a commensurate increase in headcount. This fiscal scrutiny is healthy: it filters out speculative noise and highlights the companies that are fundamentally re-engineering their cost structures through machine intelligence.

Impact & Forecast: The 24-Month Horizon

Over the next two years, we expect a transition from AI-assisted humans to human-supervised AI networks. In 2026, we expect the emergence of 'Personal AI Operating Systems' that manage an individual's digital life across devices. In the enterprise sector, the 'Siloed AI' model will give way to 'Interoperable Intelligence', in which different models from different vendors communicate through standard protocols to solve cross-departmental problems. We also expect a major surge in Edge AI, where processing happens locally on the device rather than in the cloud, driven by privacy concerns and the need for near-zero-latency response times in sectors such as autonomous logistics and robotic manufacturing. The regulatory environment will also mature, with the EU AI Act setting a global benchmark for ethical deployment, potentially slowing some high-risk applications while providing a stable framework for long-term investment.

Conclusion: The era of AI experimentation is over; the era of AI execution has begun. For the modern enterprise, the challenge is no longer choosing the 'best' model but building the most resilient and scalable AI architecture. Those that succeed in integrating these technologies into their core DNA will reach levels of efficiency that were previously unimaginable. At VELOTECHNA, we remain convinced that the strategic deployment of intelligence is the greatest competitive advantage of the 21st century. The window for foundational adoption is closing; now is the time for decisive action.
