The Edge AI Revolution: How On-Device Intelligence Is Redefining The Silicon Arms Race

VeloTechna Editorial

Observed on Jan 14, 2026

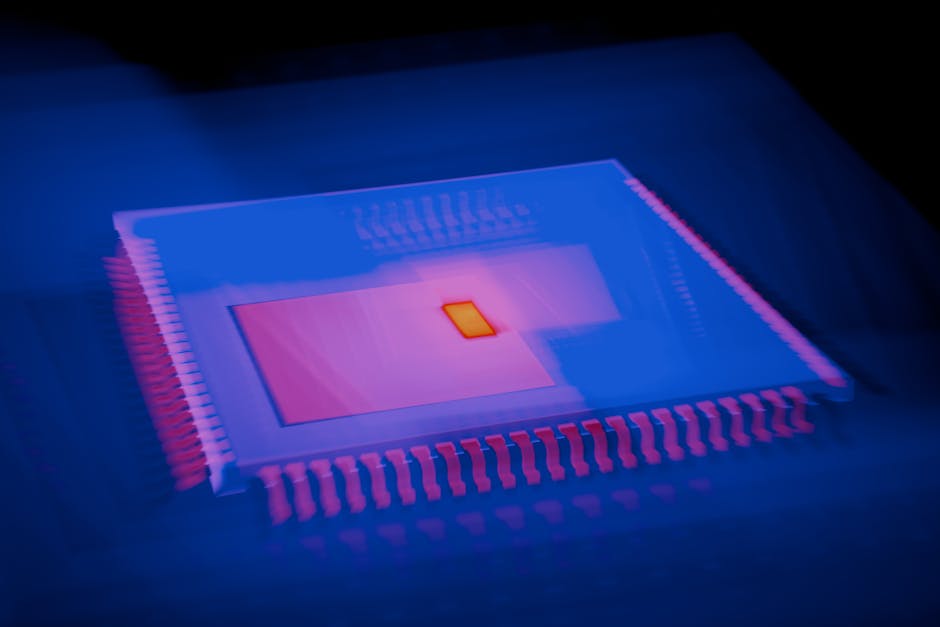

Technical Analysis Visualization

VELOTECHNA, Silicon Valley - The global technology landscape is undergoing a fundamental transformation, moving away from the centralized cloud computing paradigm that dominated the last decade. As we transition into an era defined by generative intelligence, the focus is shifting from massive data centers to the hardware in our pockets and on our desks. This pivot is not just a technical evolution; it is a strategic necessity driven by the need for privacy and reduced latency, and by the high cost of server-side inference.

The industry's current trajectory, as highlighted by recent strategic moves in the sector (Source), underscores the big bet on "Edge AI": the ability of consumer devices to run complex Large Language Models (LLMs) and diffusion models locally, without requiring a persistent internet connection or third-party server processing.

Mechanism: Engineering Local Intelligence

The technical challenges of Edge AI are enormous. To run models with billions of parameters on mobile devices, manufacturers must optimize three critical vectors: Neural Processing Unit (NPU) throughput, memory bandwidth, and thermal efficiency. Unlike general-purpose CPUs or GPUs, NPUs are specialized circuits designed specifically for the low-precision arithmetic that deep learning relies on. We're seeing a race to the top in "TOPS" (trillions of operations per second), with companies pushing the boundaries of what's possible within a power budget of 5 to 10 watts.
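To make "low-precision arithmetic" concrete, here is a minimal sketch of symmetric 8-bit quantization in plain Python. It is an illustration only, not any vendor's actual NPU pipeline: the function names and the sample weights and activations are hypothetical, and real toolchains typically use finer-grained (per-channel or per-block) schemes.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127].

    Hypothetical helper for illustration; returns the integers and the scale
    needed to map them back to floats.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def int8_dot(weights, activations):
    """Dot product done on integers, rescaled to a float once at the end."""
    q_w, scale_w = quantize_int8(weights)
    q_a, scale_a = quantize_int8(activations)
    # Integer multiply-accumulate: the kind of operation NPUs execute in bulk.
    accumulator = sum(w * a for w, a in zip(q_w, q_a))
    return accumulator * scale_w * scale_a


# Made-up sample values, chosen only to show the approximation error.
weights = [0.12, -0.53, 0.98, -0.27]
activations = [1.5, 0.3, -0.8, 2.1]

exact = sum(w * a for w, a in zip(weights, activations))
approx = int8_dot(weights, activations)
print(f"floating-point result: {exact:.4f}")
print(f"int8 result:           {approx:.4f}")
```

The two results should agree closely but not exactly. That small loss of precision is the trade-off accepted in exchange for cheaper, lower-power integer hardware.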

In addition, the bottleneck is no longer just raw computing power, but the speed at which data moves from memory to the processor. This has led to the adoption of high-bandwidth unified memory architectures, which allow the NPU to access the same memory pool as the CPU and GPU. Because data no longer has to be copied between separate pools, latency drops sharply for AI tasks such as real-time image generation and semantic text analysis.
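A rough back-of-the-envelope calculation shows why memory bandwidth matters so much. The sketch below assumes a dense model generating one token at a time, where each new token requires reading every weight from memory once; under that assumption, bandwidth divided by model size gives an upper bound on token rate. The model size and bandwidth figures are hypothetical round numbers, not measurements of any real device, and the estimate ignores caches, activations, and compute limits.

```python
GB = 1e9  # bytes in a (decimal) gigabyte


def decode_tokens_per_second(model_bytes, bandwidth_bytes_per_s):
    """Upper bound on tokens/s if every token reads all weights once.

    Simplifying assumption: generation is purely memory-bandwidth-bound.
    """
    return bandwidth_bytes_per_s / model_bytes


# Hypothetical model: 4 GB of weights resident in memory.
model_bytes = 4 * GB

# Hypothetical memory bandwidths, for comparison only.
for label, bandwidth in [("50 GB/s", 50 * GB),
                         ("100 GB/s", 100 * GB),
                         ("400 GB/s", 400 * GB)]:
    rate = decode_tokens_per_second(model_bytes, bandwidth)
    print(f"{label:>9}: at most {rate:.1f} tokens/s")
```

Under these assumptions, doubling memory bandwidth doubles the ceiling on generation speed, regardless of how many TOPS the NPU advertises.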

The Players: The Tripartite Struggle for Domination

The competitive landscape is currently divided into three distinct camps. First, there are the Ecosystem Titans, such as Apple, which leverages vertical integration to combine custom silicon (M- and A-series chips) with its own software frameworks. Its advantage lies in a closed loop that optimizes the user experience for privacy-critical on-device tasks.

Second, there are the Chipset Innovators, led by Qualcomm and NVIDIA. Qualcomm's Snapdragon X Elite platform represents a direct challenge to traditional PC architecture, promising superior AI performance for the Windows ecosystem. NVIDIA, while dominant in the data center, is increasingly focused on bringing RTX-accelerated AI capabilities to high-end laptops, targeting the creator and developer market.

Finally, there are the Legacy Architects: Intel and AMD. Both are racing to integrate NPUs into their standard x86 architectures to avoid irrelevance in a market that is rapidly moving toward ARM-based efficiency. The tension here is between legacy support and modern optimization.

Market Reaction: Latency Assessment

The market's response to these changes has been cautious optimism followed by aggressive capital reallocation. Investors are moving away from software-only AI plays and toward hardware providers that can drive the “AI PC” and “AI smartphone” upgrade cycles. There is growing awareness that for AI to become truly ubiquitous, it must be “always on” and respond instantly, which only on-device processing can provide.

Consumer sentiment has also shifted. As users become more aware of data privacy issues, the ability to process sensitive personal information locally, without it ever leaving the device, is becoming a premium selling point. This has created a bifurcated market, where high-end AI-enabled devices command much higher margins, while entry-level hardware without dedicated AI silicon risks becoming obsolete within a single product cycle.

Impact & Forecast: 24-Month Horizon

Over the next two years, VELOTECHNA predicts a "Great Hardware Refresh." By mid-2026, we expect 70% of all premium smartphones and 50% of professional-grade laptops to be equipped with dedicated AI silicon capable of running 10-billion-parameter models at interactive speeds. This will lead to the demise of the “chatbot” as a standalone interface, as AI becomes an invisible layer integrated into every operating system function, from predictive file management to real-time voice translation.
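To put the 10-billion-parameter figure in perspective, the following sketch computes how much memory the weights alone would occupy at a few common precisions. It is simple arithmetic (parameters times bits per parameter), with a hypothetical function name; it ignores activations, caches, and runtime overhead, so real requirements would be higher.

```python
def weight_footprint_gb(num_params, bits_per_param):
    """Memory needed for the weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS = 10_000_000_000  # the 10-billion-parameter model size discussed above

for name, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{name:>6} weights: {weight_footprint_gb(PARAMS, bits):.1f} GB")
```

At 16 bits per parameter the weights need 20 GB, which exceeds the memory of most phones; at 4 bits they need 5 GB. This is why aggressive quantization tends to go hand in hand with on-device deployment.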

Furthermore, we anticipate a significant shift in the cloud-to-edge ratio. While the cloud will remain the primary place to train large models, the “Economics of Inference” will increasingly favor the edge. This will significantly reduce operational costs for software companies as they shift the computing burden to consumers' own hardware, potentially leading to a new wave of "AI-first" applications free of subscription-based computing costs.

Conclusion

The transition to Edge AI represents one of the most significant architectural changes in the history of computing. By moving the AI “brains” from remote data centers to local silicon, the industry addresses the triple threat of privacy, latency, and cost. For manufacturers, the race is on to provide the most efficient and powerful NPUs. For consumers, the reward is a more personalized, secure, and responsive digital experience. At VELOTECHNA, we believe the winners of this decade will not be those who create the greatest models, but those who can effectively shrink them down until they fit in the palm of your hand.
